I’ve searched for this long and hard and haven’t found a consensus that makes sense. SEO spam is really slowing me down on this one; searches like “restic backup database” get me garbage.

I’ve got databases in docker containers in LXC containers, but that shouldn’t matter (I think).

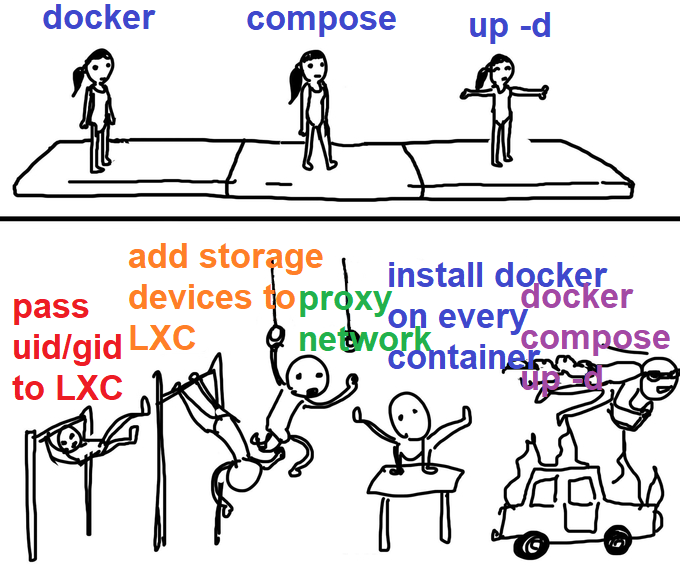

me-me about containers in containers

I’ve seen:

- Just back up the databases like everything else; they’re “transactional” so it’s cool

- Some extra docker image to load in with everything else that shuts down the databases in docker so they can be backed up

- Shut down all database containers while the backup happens

- A long ass backup script that shuts down containers, backs them up, and then moves to the next in the script

- Some mythical mentions of “database should have a command to do a live snapshot, git gud”

None seem turnkey except for the first, but since so many other options exist I have a feeling the first option isn’t something you can rest easy with.

I’d like to minimize backup downtime, obviously. What if the backup takes a long time for whatever reason? I’d be denial-of-servicing myself trying to back up my service.

I’d also like to avoid a “long ass backup script” cause autorestic/borgmatic seem so nice to use. I could, but I’d be sad.

So, what do y’all do to back up Docker databases with backup programs like Borg/Restic?

I guess the trouble is that you don’t want to read the volumes where the db files live while the database is still writing to them, because the files aren’t guaranteed to be consistent at a given point in time, right?

Does the given engine support a backup method/utility that can be used to copy files to some volume on a set schedule?
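To make that concrete, here’s a rough sketch of the “dump to a directory, then let the backup tool pick up the directory” idea. It assumes PostgreSQL (whose `pg_dump` takes a consistent dump while the database stays up) and a container reachable through `docker exec`. The function names, the `postgres` default user, and the file naming are all made up for illustration, so treat it as a starting point and not a finished tool.

```python
import subprocess
from pathlib import Path


def pg_dump_command(container: str, database: str, user: str = "postgres") -> list[str]:
    """Build a `docker exec` command that runs pg_dump inside the container.

    The container, database and user names are placeholders (assumptions).
    """
    return [
        "docker", "exec", container,
        "pg_dump", "-U", user, "-d", database, "--format=custom",
    ]


def dump_database(container: str, database: str, out_dir: Path,
                  user: str = "postgres", runner=subprocess.run) -> Path:
    """Write a dump of `database` to out_dir and return the dump's path.

    The dump goes to a temporary file first and is renamed only on success,
    so the backup tool never picks up a half-written dump.
    """
    out_dir.mkdir(parents=True, exist_ok=True)
    target = out_dir / f"{container}-{database}.dump"
    tmp = out_dir / (target.name + ".tmp")
    with tmp.open("wb") as fh:
        # check=True raises if pg_dump fails, so the rename below is skipped.
        runner(pg_dump_command(container, database, user), stdout=fh, check=True)
    tmp.replace(target)
    return target
```

Something like `dump_database("my-postgres", "appdb", Path("/srv/db-dumps"))` (hypothetical names) would run on a schedule just before the backup, and the dump directory is what gets backed up, not the live data volume. Other engines would need their own dump command in place of `pg_dump`.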

As far as I know (unless smarter people know otherwise), you need a “long ass backup script” to make your own fun on a set schedule. Autorestic and borgmatic are smooth but don’t seem to have the granularity to deal with it. (Unless smarter people know how to make them do it, which I may be fishing for lol)